Last week at the SMX East conference in New York, I sat in on sessions about Google’s Panda algorithm updates and spoke on one of them. One thing that really struck me was how extraordinarily unified my fellow search marketing experts were about both the causes of Panda’s impact on sites and the solutions for it. Each marketer spoke about improving sites’ quality, usability, and overall user experience (“UX”).

For those of us who have been following Google’s evolution over time, the Panda updates actually weren’t all that surprising. For me, the emergence of Panda seemed very familiar, harkening back perhaps as far as 2006, when Google clamped down on affiliate sites. At that same time, Henk van Ess revealed how Google was hiring temporary quality evaluation staff to rate search results. In the internal Google documents which van Ess exposed, the evaluators were instructed to give poor ratings to spam content, porn ranking on inappropriate keyword phrases, and “thin affiliate content”. It became clear very quickly that the negative human ratings for “thin affiliate content” were related quite closely to the virtual penalization that many affiliate sites experienced at that time.

What Google was focusing upon in reducing the ratings of “thin affiliates” were instances where a search results page would be filled up with links to pages which all had virtually the same content, and where those pages often weren’t the final destinations of the people who landed upon them (obviously, with most affiliate sites one clicks through to the actual retailer’s site, where more information can be found and orders can be placed). From Google’s perspective, it was a poor user experience for there to be millions of pages indexed which all had essentially identical content and which often edged out other, more worthwhile pages which consumers might prefer.

From all of the information around the “Panda” Updates, it seems highly likely to me that Google is continuing to leverage its human quality evaluator staff, along with a number of automated metrics which it could also incorporate in determining the quality of pages. For instance, consider the number of people who click back out of a page they found in the search results in order to select another page: this sort of bounce rate metric could indicate that a page is of very poor quality for a particular keyword term.
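To make the click-back idea concrete, here is a minimal sketch of how such a metric could be computed from a click log. It is purely illustrative and not Google’s actual method: the log format, the `pogo_stick_rates` function name, the 10-second dwell threshold, and the example URLs are all assumptions made up for this example.

```python
from collections import defaultdict

def pogo_stick_rates(visits, max_dwell_seconds=10.0):
    """Return {(query, url): share of visits that were quick returns}.

    Each visit is a tuple (query, url, dwell_seconds, returned_to_results).
    The tuple layout and the dwell threshold are hypothetical choices for
    this sketch, not a documented search engine signal.
    """
    totals = defaultdict(int)
    quick_returns = defaultdict(int)
    for query, url, dwell_seconds, returned_to_results in visits:
        key = (query, url)
        totals[key] += 1
        # Count only visitors who went back to the results page quickly.
        if returned_to_results and dwell_seconds <= max_dwell_seconds:
            quick_returns[key] += 1
    return {key: quick_returns[key] / totals[key] for key in totals}

# Made-up log entries for one keyword term and two landing pages.
visits = [
    ("blue widgets", "thin-page.example", 3.0, True),
    ("blue widgets", "thin-page.example", 5.0, True),
    ("blue widgets", "thin-page.example", 40.0, False),
    ("blue widgets", "good-page.example", 95.0, False),
    ("blue widgets", "good-page.example", 4.0, True),
]

rates = pogo_stick_rates(visits)
for (query, url), rate in sorted(rates.items()):
    print(f"{query!r} -> {url}: {rate:.2f}")
```

Keying the rate on the query and the page together matches the point above: a page can be a poor answer for one keyword term and a perfectly good answer for another.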

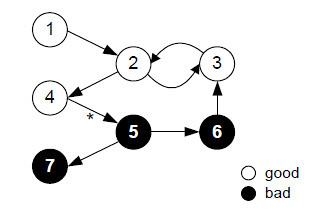

Naturally, there are far more pages on the internet than Google could reasonably have evaluators visit and assess, but there have also been developments in methods for taking small numbers of ratings and applying them algorithmically across larger numbers of websites and pages. The TrustRank analysis technique is just one of these methods, and the research paper describing it shows how one could use a small sample set of rated webpages, in combination with an automated analysis of the link graph associated with those pages, in order to broadly apply ranking decisions to good content or poor content.
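The general idea of spreading a few human ratings across the link graph can be sketched as a seed-biased, PageRank-style propagation, which is the core of TrustRank as I understand it. This is a simplified sketch under stated assumptions, not the paper’s full procedure (it skips seed selection entirely) and certainly not whatever Google runs in production. The `trust_rank` function name, the damping value, the iteration count, and the toy graph are all illustrative.

```python
def trust_rank(links, trusted_seeds, damping=0.85, iterations=20):
    """Propagate trust from hand-rated seed pages along outgoing links.

    links: dict mapping each page to the list of pages it links to.
    trusted_seeds: pages a human reviewer rated as good.
    The damping and iteration defaults are illustrative assumptions.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)

    seeds = {page for page in trusted_seeds if page in pages}
    if not seeds:
        raise ValueError("at least one trusted seed must be in the graph")

    # All of the initial trust is split evenly among the seed pages.
    seed_share = {p: (1.0 / len(seeds) if p in seeds else 0.0) for p in pages}
    trust = dict(seed_share)

    for _ in range(iterations):
        # Each round, seeds are topped up with a fixed share of trust...
        updated = {p: (1.0 - damping) * seed_share[p] for p in pages}
        # ...and every page passes damped trust evenly to its link targets.
        for source, targets in links.items():
            if not targets:
                continue  # pages without outgoing links pass nothing on
            share = damping * trust[source] / len(targets)
            for target in targets:
                updated[target] += share
        trust = updated
    return trust

# Toy graph: one rated seed page and an isolated pair of spam pages.
links = {
    "seed": ["a", "b"],
    "a": ["c"],
    "b": [],
    "spam": ["spam2"],
    "spam2": ["spam"],
}
trust = trust_rank(links, trusted_seeds=["seed"])
for page in sorted(trust, key=trust.get, reverse=True):
    print(f"{page}: {trust[page]:.4f}")
```

In this toy graph, trust fades with each hop away from the rated page, and the spam pair ends up with none because no trusted page links to it, however heavily the two pages link to each other.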

This combination of human ratings and user interaction metrics is likely used by Google to determine a sort of “quality score” for pages, and some mechanism similar to the TrustRank method is probably used to apply the quality score values across a broad swath of a site’s sections and pages.

While the Panda Updates were initially targeting “content farms”, or sites which specifically generated large numbers of pages to target user search queries, the criteria used to ding them could easily wreck many other types of sites as well. Poorly constructed sites where users are confused about where to find what they were searching for, or sites which make a bad impression by being too crammed full of ads, tricky links, or unsophisticated layouts, might also fall under the treads of Panda.

The leaked evaluator documents from Google gave a few ideas of the sorts of things which could separate a “thin affiliate” that got bad ratings from sites which happened to contain affiliate content but which might otherwise get good ratings. Having additional content on the pages, particularly “value-added” content such as maps, user ratings, or price comparisons, could make a difference.

Here in 2011, I’d say the bar is even higher, though. You want your site to make a good impression when a searcher lands upon it, and you want them to have trust in your content. You need the site to be usable so that it doesn’t frustrate users, and you need to seriously consider removing impediments which annoy or hamper users in getting what they’re seeking. That gigantic interstitial ad that blocks them from the page, or all the cluttered fine print and links, may result in higher bounce rates which will translate into lower rankings for you.

In the past year, Google introduced Page Speed as a ranking factor, and speed is a major element which impacts consumer satisfaction with webpages. In their blog posts about Panda, they mention improving a number of elements affecting the quality of a site, including spelling and grammar (we previously highlighted how Google could use spelling and grammar in quality determinations and rankings). With the increasing attention to user experience factors in ranking determinations, it has become clear that if you’re not doing usability analysis, you may not be doing SEO at all.

Search engine optimization based purely upon clever technical tricks really appears to be on the wane with the Panda Updates. That kind of SEO may well decline in favor of much more sustainable, long-term attention to User Experience and Usability factors. Unfortunately, I don’t see a whole lot of websites or companies positioned to take advantage of the trends. Most of the companies I’ve consulted with continue to base site design decisions more heavily upon arbitrary egos, expediency, and mere imitation of their competition than upon informed UX testing.